The Trillion-Dollar Apprentice: AI Agents and the Redesign of Enterprise Work

In a fourth-floor office in Hinjawadi, Pune, the fluorescent lights still burn past nine on the last day of the month, but the bodies beneath them have rearranged themselves in ways that would have been unrecognizable three years ago. The finance team at a mid-sized auto components firm — anonymized here, though the pattern is common enough to be representative — used to spend eleven days closing their monthly books. Eleven days of invoice reconciliation and anomaly hunting and the quiet dread of mismatched ledgers. Today an AI agent handles the reconciliation and flags the anomalies and drafts the preliminary close in four days, using a combination of rules-based matching and statistical anomaly detection running against their ERP system's transaction logs. The team has not shrunk. It has changed shape, the way a river changes shape when it encounters a new gradient. The accountants now spend their hours teaching the agent what "suspicious" means in the specific, idiosyncratic context of their vendor relationships — why a 12% invoice variance from a Nashik supplier in monsoon season is perfectly normal, while a 3% variance from a Chennai supplier in January is not. This is a strange new apprenticeship running in reverse, where the master teaches and the apprentice does, and the question that lingers like humidity in a Pune July is: what happens when the apprentice begins to understand things the master never said aloud?

The numbers suggest this question is not hypothetical. McKinsey estimates that generative AI could add $2.6 to $4.4 trillion in annual value across enterprise use cases — a figure that encompasses the full spectrum of generative AI, from code generation to customer operations, and not AI agents specifically. No authoritative source has yet isolated the agent-specific share of that value, though it is reasonable to expect agents will constitute a growing fraction as the technology matures; the title of this essay is an extrapolation, a wager on trajectory rather than a citation of settled fact. The number is so large it resists intuition, like trying to picture the volume of the Arabian Sea. And yet two-thirds of organizations have yet to reimagine their business processes around AI, leaving the deeper transformation unrealized even as surface-level productivity gains accumulate — the apprentice is willing and the workshop, by every measure, is not yet ready for what it can do.

What Arrived While We Were Watching the Chatbots

To understand the scale of what is arriving, one must first understand what has already arrived and how it differs from what came before. The chatbots of 2023 could answer questions and summarize documents, and the copilots of 2024 could suggest the next line of code, and each generation felt like a leap until the agents arrived and revealed the earlier tools as rehearsals. An agent takes a goal, decomposes it into sub-tasks through planning loops, calls external tools through APIs and database queries, executes those steps across multiple systems, observes the results, and re-plans when something goes wrong. The frameworks that enable this (LangGraph for stateful graph-based workflow orchestration, AutoGen for multi-agent conversation architectures, CrewAI for role-based agent team coordination) implement meaningfully different approaches to the underlying problem. Robust re-planning on failure remains an active engineering challenge rather than a solved capability; most production agent systems require extensive fallback engineering and human escalation paths for non-trivial errors. An agent carries the autonomy of an apprentice trusted to run the shop while the ustad is away. The distinction from a copilot is real, though it is better understood as a spectrum than a binary. Many 2025-era copilots have incorporated agentic capabilities like multi-step planning and tool use (GitHub Copilot Workspace and Cursor's agent mode among them), and the meaningful difference is often one of degree of autonomy and scope of action (how many systems the tool can reach across, how long it can persist without human input, how much of the goal decomposition it performs on its own) rather than a categorical architectural divide.
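The cycle of planning, acting, observing, and re-planning can be made concrete with a minimal sketch. Everything named here is hypothetical and chosen for illustration: `run_agent`, the `plan` callable, the tool names, and the `max_replans` limit are assumptions, not the API of LangGraph, AutoGen, or CrewAI. The point is only the shape of the loop, including the human escalation path that production systems tend to need.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class StepResult:
    ok: bool
    output: str


# A tool is any callable that takes one string argument and returns a StepResult.
Tool = Callable[[str], StepResult]
# A planner maps (goal, names of tools that have failed) to a list of (tool_name, argument).
Planner = Callable[[str, list[str]], list[tuple[str, str]]]


@dataclass
class AgentRun:
    goal: str
    log: list[str] = field(default_factory=list)
    escalated: bool = False


def run_agent(goal: str, plan: Planner, tools: dict[str, Tool],
              max_replans: int = 2) -> AgentRun:
    """Plan, act, observe; re-plan on failure; escalate when re-plans run out."""
    run = AgentRun(goal)
    failures: list[str] = []
    for _ in range(max_replans + 1):
        failed = False
        for tool_name, arg in plan(goal, failures):
            result = tools[tool_name](arg)                     # act
            run.log.append(f"{tool_name}({arg}) -> {result.output}")  # observe
            if not result.ok:
                failures.append(tool_name)                     # feed back into the next plan
                failed = True
                break
        if not failed:
            return run                                         # goal reached
    run.escalated = True                                       # hand off to a human
    return run


# Hypothetical planner: fall back to a file export once the live API has failed.
def toy_plan(goal: str, failures: list[str]) -> list[tuple[str, str]]:
    source = "ledger_export" if "ledger_api" in failures else "ledger_api"
    return [(source, "2025-03"), ("match_invoices", "2025-03")]


toy_tools: dict[str, Tool] = {
    "ledger_api": lambda arg: StepResult(False, "timeout"),
    "ledger_export": lambda arg: StepResult(True, "rows loaded"),
    "match_invoices": lambda arg: StepResult(True, "matching done"),
}

run = run_agent("reconcile March invoices", toy_plan, toy_tools)
```

In this toy run the first plan fails at `ledger_api`, the second plan routes around it, and the run finishes without escalation. If every data source failed, the loop would exhaust its re-plans and set `escalated`, which is the fallback path the paragraph above describes.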

IDC forecasts a tenfold increase in AI agent use by G2000 companies by 2027. IBM has described the emergence of what it calls "super-agents" — a term that, in IBM's usage, refers to multi-modal agents with persistent state that operate across browsers and editors and inboxes, a continuous intelligence woven through the fabric of daily work the way electricity is present in the walls of a building, invisible until you reach for it. (The term deserves more precision than it typically receives: in multi-agent systems literature, a super-agent usually means a meta-orchestrator that coordinates sub-agents, while IBM uses it more loosely to describe agents with broad, cross-application reach.) And here lies the central tension of this moment: 66% of organizations report productivity gains from AI, but only 34% have actually reimagined their business processes around it. The majority are asking the apprentice to sweep the same floors the old way, only faster.

In India, a government survey reports that 87% of enterprises employ AI in some form. That figure is worth treating with particular care: "employ AI" can mean anything from a single pilot project to full production deployment, and the underlying survey methodology and sample frame are not publicly accessible for independent scrutiny (the available source is a PIB press release summarizing the survey, not the survey itself). The gap between deployment and transformation is especially acute here, because the stakes are especially high. Agentic AI adoption specifically is almost certainly far lower than that headline number suggests, and the distance between "we have an AI initiative" and "AI agents are reshaping our workflows" remains vast.

Where the Agents Actually Work

The temptation, always, is to speak of AI agents in the language of the spectacular: the autonomous lab assistant, the self-driving supply chain. But the trillion-dollar value hides in the mundane, in the workflows so routine they have become invisible. Industry reporting suggests growing adoption of agents for multi-stage workflows, though precise figures on what share of organizations have moved beyond single-task automation to true multi-step agent deployment remain difficult to pin down. Cross-functional, end-to-end deployment is rarer still, concentrated in the high-volume, unglamorous use cases like customer support and operations, where minutes saved multiply across millions of transactions.

And the bulk of agent adoption targets business process automation, which is worth pausing over. The popular imagination places AI in the developer's IDE, but the economic center of gravity sits in the back office — in procurement and invoicing and compliance and the endless, necessary choreography of keeping a large organization alive.

The results, where redesign has accompanied deployment, are striking. Aggregated industry reporting suggests automated finance processes are accelerating close cycles by 30–50%, though this figure is sourced through secondary aggregation rather than named primary studies, and the specific baselines and agent architectures vary widely. The opening anecdote in this essay — an eleven-day close compressed to four, a roughly 64% reduction — likely represents an outlier above that range, a case where the pre-existing process was particularly amenable to agent-driven redesign. An air carrier uses agents for real-time flight rebooking when disruptions cascade through a network. A manufacturer deploys them across the entire new product development lifecycle. Luma AI has automated the journey from creative brief to advertising campaign. Each of these is an apprentice that has graduated from sweeping floors to cutting fabric under supervision — and in some cases, managing an entire order.

The 2x-to-10x Gap: Why Redesign Beats Deployment

When you bolt an agent onto a broken process, you get broken results at speed — the same misallocations and redundancies, only now they arrive before anyone has time to catch them. Industry analyses suggest that agent-centric workflow redesign yields 2 to 10x productivity improvements compared to traditional AI implementation — that is, compared to bolting an agent onto a process designed for human hands and human rhythms. That range is wide enough to warrant caution: it is sourced from aggregated industry reporting without named primary studies or controlled methodologies, and the lower end applies to straightforward automation of well-structured tasks while the upper end reflects deep organizational redesign. It should be treated as directional rather than precise. But even the conservative figure represents a doubling that most enterprises have yet to pursue.

Think of it this way. In the old karkhanas of Lucknow and Moradabad, a master craftsman — the ustad — would take on apprentices who watched and imitated and gradually developed their own instincts. But the brilliance of the karkhana was the entire arrangement of the workshop — the sequence of tasks and the flow of materials and the unspoken protocols and the accumulated habits of decades that together determined who touched what and when. When a new apprentice arrived, the ustad did not merely hand them a tool and point at the workbench. The ustad reorganized the workshop around the apprentice's evolving capabilities, giving them more complex work as their hands grew sure, adjusting the rhythm of production to accommodate the new capacity. This is what workflow redesign means in the age of AI agents: redesigning the workshop around what the apprentice can do and will soon be able to do — rearranging the benches, resequencing the tasks, opening new rooms.

The Deloitte 2026 State of AI in the Enterprise survey reveals a telling pattern: enterprises consistently cite enhancing insights (53%) and reducing costs (40%) as their top goals for AI — figures reported via secondary aggregation of the Deloitte data, as the primary report's exact framing may differ. But these are outputs of redesign, not of deployment alone. The discipline that matters now is shifting from "prompt engineering" to what practitioners call "context engineering" — giving agents instructions and, crucially, the full operational context they need to act wisely: the history and the constraints and the unwritten rules and the institutional memory that no document captures.

In practice, this means building retrieval-augmented generation pipelines against enterprise knowledge bases, and defining structured tool-use schemas that tell the agent which APIs it can call and under what conditions, and designing workflow state management so the agent knows where it is in a multi-step process and what has already been decided — and, critically, enforcing role-based access controls at the right architectural layer. This last point deserves a moment of specificity, because the difference between prompt-level access control and middleware-enforced access control is the difference between a suggestion and a wall. A prompt-level instruction — "you do not have permission to access payroll data" — can be bypassed through prompt injection, through adversarial inputs that rewrite the agent's understanding of its own constraints. Middleware-enforced access control, where the agent's API calls are intercepted and validated against a permission schema before execution, is architecturally sound in a way that prompt-level controls are not. For any enterprise deploying agents against sensitive data — financial records and customer information and personnel files — the enforcement layer must sit outside the agent's reasoning loop, not inside it.
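A minimal sketch of the middleware idea, under stated assumptions: the role name, the `PERMISSIONS` schema, and the `execute` gateway are all hypothetical, and a real deployment would back them with the enterprise's own identity and policy systems. What the sketch shows is the placement of the check. It runs in ordinary code between the agent's requested call and the tool, so no text in the agent's context can alter it.

```python
from dataclasses import dataclass
from typing import Callable


class PermissionDenied(Exception):
    """Raised by the gateway when a tool call is outside the agent's permissions."""


@dataclass(frozen=True)
class ToolCall:
    agent_role: str   # identity assigned by the platform, not claimed by the model
    tool: str
    resource: str


# Hypothetical permission schema: role -> tool -> resources the role may touch.
PERMISSIONS: dict[str, dict[str, set[str]]] = {
    "finance_close_agent": {
        "read_table": {"invoices", "vendor_ledger"},
        "post_journal_draft": {"general_ledger"},
    },
}


def authorize(call: ToolCall) -> None:
    """Deny by default: anything not listed in the schema is refused."""
    allowed = PERMISSIONS.get(call.agent_role, {}).get(call.tool, set())
    if call.resource not in allowed:
        raise PermissionDenied(
            f"{call.agent_role} may not {call.tool} on {call.resource}"
        )


def execute(call: ToolCall, handlers: dict[str, Callable[[str], str]]) -> str:
    """The only path from agent to tools; the check cannot be skipped by a prompt."""
    authorize(call)
    return handlers[call.tool](call.resource)


# Stand-in tool implementation for the example.
handlers = {"read_table": lambda resource: f"rows from {resource}"}

allowed_result = execute(
    ToolCall("finance_close_agent", "read_table", "invoices"), handlers
)

try:
    execute(ToolCall("finance_close_agent", "read_table", "payroll"), handlers)
    payroll_blocked = False
except PermissionDenied:
    payroll_blocked = True
```

Even if an injected instruction persuades the agent to request the payroll table, the request fails at `authorize`, because the schema lives outside the agent's reasoning loop. The deny-by-default lookup is a design choice in this sketch: an unknown role, tool, or resource is refused rather than allowed.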

Gartner projects that over 80% of enterprise finance teams will use AI-driven automation by the end of 2026 — though this figure reaches us through secondary aggregation rather than a direct Gartner publication, and it is worth noting that "AI-driven automation" is a broader category than agentic AI, encompassing traditional RPA with machine learning and rules-based automation with AI classification. Automation without redesign is just faster bureaucracy, and faster bureaucracy is still bureaucracy.

India's Particular Wager

I think often about the peculiar position India occupies at this inflection point. India brings to this moment a technology workforce of several million, built over two decades of global outsourcing. It brings the IndiaAI Mission's allocated funding as foundational capital (widely reported as ₹10,300 crore, though readers should verify against the latest government communications, as budget figures have been cited differently across sources). It faces growing industry projections that the country will need well over a million AI professionals within the next few years. And it brings emerging multilingual foundation models like BharatGen that aim to support Indian languages as the substrate for agents that can operate in India's full linguistic reality: governance agents in Tamil and agricultural advisory agents in Marathi and supply chain agents in Hindi.

But the nature of what "AI professional" means is shifting beneath our feet. The workers India needs will be engineers writing model architectures and, in far greater numbers, agent orchestrators: people who understand a business process deeply enough to redesign it around autonomous agents, and who understand the agents deeply enough to know where they need guardrails and where they can run free. This is the ustad's skill translated into the digital age — the wisdom to organize the workshop so that every hand, human and digital, finds its proper task.

For Infosys and TCS and Wipro, the transformation is existential. They built empires on executing processes for global clients; now they must evolve into organizations that build and manage autonomous agents executing those processes, the apprenticeship metaphor made industrial, at a scale the old ustads of Moradabad could never have imagined. They are not standing still: Infosys Topaz and TCS's AI.Cloud and Wipro's ai360 already signal the pivot toward AI-native service delivery. But whether platform plays and agent-as-a-service offerings can replace the labor-arbitrage model at equivalent scale remains genuinely open. And for Make in India, the implications are equally profound: AI agents automating complex manufacturing and supply chain workflows represent the convergence of India's factory floor with Silicon Valley's algorithms. Adoption is already underway, already irreversible; the question now is how to architect what follows. India must build and own the agent ecosystems, because the difference between owning the karkhana and renting a bench inside it compounds across decades: the owner's choices sediment into the craft itself, into the training and the standards and the institutional memory that outlasts any single generation of tools.

The Kill Switch and the Question of Trust

Every apprentice, no matter how gifted, must operate within bounds. And here the metaphor of the karkhana carries its deepest tension, because in the traditional workshop, the guild's rules determined what an apprentice was and was not permitted to do unsupervised — which materials they could handle and which clients they could meet and which tools they could sharpen alone and which decisions required the ustad's nod before proceeding.

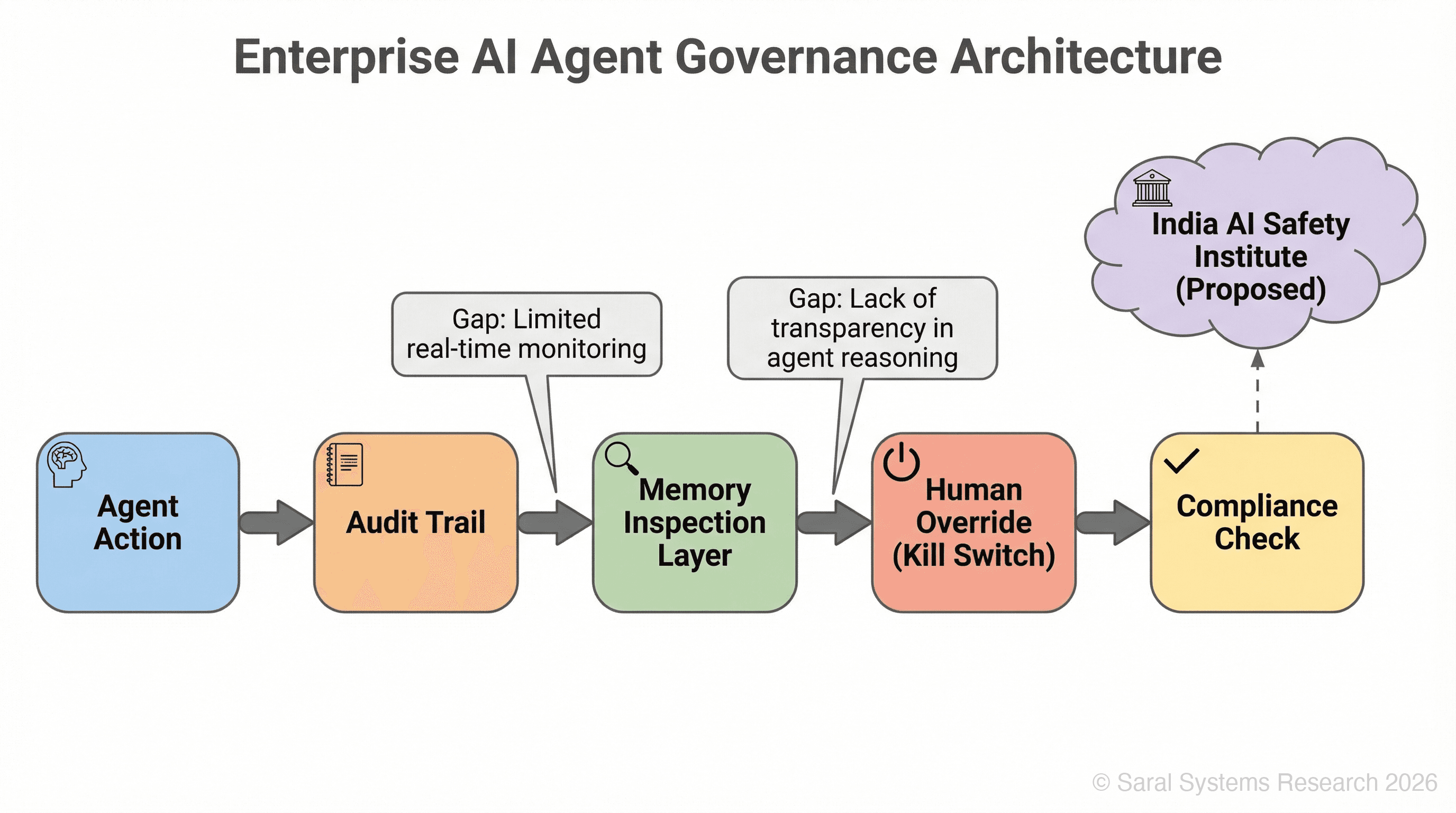

A recent MIT study on AI agent systems found significant gaps in monitoring, transparency, and reliable stop controls across widely deployed platforms. This finding is sourced through secondary reporting rather than the primary MIT research, and the specific systems studied and the precise definition of "monitoring" (whether logging, observability dashboards, or full audit trails) deserve more detailed examination than the secondary source provides. Readers seeking to evaluate the study's scope and methodology should locate the primary MIT publication directly. The "kill switch" problem is harder than it sounds: halting a specific agent without shutting down an entire interconnected system is like trying to stop one loom in a textile mill without tangling every thread. Meanwhile, Google has open-sourced its "Always On Memory Agent": agents that remember across sessions, that build a persistent understanding of context and preference and history. This is powerful and deeply unsettling in equal measure, because an agent that remembers everything raises the question of what it should be allowed to forget, and who decides.
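One common way to approach the single-loom problem is a cooperative stop flag held per agent rather than per system. The sketch below assumes hypothetical names (`AgentRegistry`, `run_steps`, the agent ids) and uses Python's standard `threading.Event`. It halts an agent only at step boundaries and does not interrupt a step already in flight, which is part of why the real problem is harder than the sketch.

```python
import threading
from typing import Callable


class AgentRegistry:
    """Keeps one stop flag per agent so a single agent can be halted in isolation."""

    def __init__(self) -> None:
        self._stops: dict[str, threading.Event] = {}
        self._lock = threading.Lock()

    def register(self, agent_id: str) -> threading.Event:
        with self._lock:
            event = threading.Event()
            self._stops[agent_id] = event
            return event

    def stop(self, agent_id: str) -> bool:
        """Signal one agent to halt; returns False if the id is unknown."""
        with self._lock:
            event = self._stops.get(agent_id)
        if event is None:
            return False
        event.set()
        return True


def run_steps(steps: list[Callable[[], str]],
              stop_event: threading.Event) -> list[str]:
    """Run steps in order, checking the agent's own stop flag before each one."""
    completed: list[str] = []
    for step in steps:
        if stop_event.is_set():
            break                      # cooperative halt at a step boundary
        completed.append(step())
    return completed


registry = AgentRegistry()
recon_stop = registry.register("reconciliation")
rebook_stop = registry.register("rebooking")

registry.stop("reconciliation")        # halt one agent; the other is untouched

recon_done = run_steps([lambda: "match", lambda: "flag"], recon_stop)
rebook_done = run_steps([lambda: "query", lambda: "rebook"], rebook_stop)
```

Here the reconciliation agent completes no steps while the rebooking agent completes both. The sketch leaves out what makes production systems difficult: steps with side effects that are already in progress, and agents that depend on each other's output.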

India's AI Governance Guidelines have recommended the establishment of an AI Safety Institute, and I believe this is among the most important institutional proposals of our time — because it signals the intention to build governance frameworks before the crisis rather than after it, and that sequencing, that insistence on foresight over reaction, may matter more than any specific regulation it produces. The trust equation for Indian enterprises is especially fraught: agents handling financial data and customer information and government records must meet standards that do not yet fully exist, and the organizations that build those standards will shape the industry for a generation.

Continuous memory will be judged on governance — whether it is bounded and inspectable and safe — as much as on capability. An apprentice who remembers everything and operates without oversight is dangerous in proportion to their capability, and these agents are becoming very capable very quickly. The problem is technological and political at once, indivisible, the way a river is both water and current simultaneously. We must govern what we build, and build only what we can govern — and the gap between those two imperatives is where the hard institutional work of the next decade lives.

When the Apprentice Outgrows the Bench

The trajectory from single-task agents to cross-functional super-agents changes the nature of human work in ways that resist neat summary: the human role becomes less about execution and more about judgment and orchestration and the slow, unglamorous labor of deciding what the agents should care about and what they should ignore and how to evaluate outcomes that no one has seen before. For India specifically, the stakes are the ones already named: ownership of the ecosystems that will define this era, measured in decades rather than in a single quarter's revenue, because the owner's choices about training and standards and tool design shape what the next generation of builders considers possible and normal and worth pursuing.

At Saral Systems, we think about this daily. We think about the AI professionals India needs, and we see people who must be engineers and guild masters and translators between worlds all at once, people whose job descriptions do not yet exist in any HR taxonomy — people who carry within them the technical understanding of what an agent can do and the human understanding of what it should do and the harder, less articulable sense of where those two kinds of knowledge grind against each other.

And I return, as I often do in the evenings, to that finance team in Pune. Consider a scenario that is becoming increasingly common across enterprises running agent-based reconciliation systems: the agent begins flagging a pattern in vendor payments that no human had explicitly noticed — a seasonal clustering in certain procurement categories that suggests renegotiation leverage worth several crore annually. Technically, this is anomaly detection and temporal clustering rather than emergent reasoning — the agent is surfacing statistical regularities in the data that human analysts, overwhelmed by volume, had never aggregated in quite this way. The team lead stares at the screen, her chai growing cold. She did not teach it this. No one taught it this. The apprentice, it seems, has begun to notice things on its own — patterns latent in the data the way monsoon is latent in a June sky, present and gathering and not yet fallen.

When the apprentice starts teaching the master, the old vocabulary of the karkhana offers no word for it — and the team lead in Pune, her chai now fully cold, the fluorescent lights still burning above her, is living inside that namelessness, inside the space where the language has not yet caught up to what is happening on her screen.