The Lakshman Rekha in Code: The JVG Algorithm and Every Digital Boundary India Has Drawn

The green checkmark arrives almost instantly. A chai vendor in Varanasi holds his phone at an angle against the morning light, and the UPI confirmation glows like a small benediction: ₹30 received, the transaction sealed, the trust complete. His customer has already turned away, lifting a clay kulhad to her lips, and neither of them pauses to consider the invisible architecture that made this moment possible: the mathematical boundary, drawn from the difficulty of factoring the product of two very large prime numbers, that guards this payment and every other payment and every biometric identity and every digitally signed document flowing through the arteries of the world's largest digital public infrastructure. That boundary rests on RSA and on its cousins in elliptic-curve cryptography, whose security comes from the related discrete logarithm problem, and for three decades these have been the quiet, faithful guardians of the modern internet.

In early 2026, a specific line of research (the JVG algorithm, announced on March 2, 2026 by the Advanced Quantum Technologies Institute, AQTI) suggested that the boundary could be crossed by an adversary, and with a machine far smaller than anyone had imagined.

I have spent years at Saral Systems working at the intersection of deep technology and national capability, and I confess that when reports emerged of this hybrid classical-quantum approach to factoring RSA keys, one requiring dramatically fewer qubits than Shor's algorithm assumed, the feeling it produced was less alarm than something closer to vertigo: the sensation of watching a line you had believed inviolable begin to shimmer and thin at its edges, the Lakshman Rekha itself losing opacity, the boundary you had trusted growing translucent under a new and unfamiliar light.

The Thousand-Fold Collapse

The comfort that preceded this moment had been decades in the building.

In 1994, the mathematician Peter Shor presented an algorithm (first at FOCS 1994, with the journal publication following in 1997) demonstrating that a sufficiently powerful quantum computer could factor large integers superpolynomially faster than the best known classical algorithms. To be precise: the best classical factoring method, the General Number Field Sieve, runs in sub-exponential time, while Shor's algorithm runs in polynomial time, making the speedup dramatic even if it is not a clean exponential-versus-polynomial separation. The cryptographic community absorbed this news with something between alarm and equanimity, because "sufficiently powerful" meant a machine requiring an estimated one million to twenty million physical qubits, and the most advanced quantum processors of the 2020s operated in the range of a few hundred to roughly a thousand. The threat occupied that peculiar zone of acknowledged reality and practical irrelevance: a locksmith's quiet awareness that every lock can theoretically be picked, held comfortably alongside the knowledge that no one alive possesses the tools to do so.
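To put rough numbers on that gap, the sketch below evaluates the standard heuristic cost formula for the General Number Field Sieve, exp((64/9)^(1/3) · (ln N)^(1/3) · (ln ln N)^(2/3)), against a simple cubic gate-count scaling for Shor's algorithm. Both are order-of-magnitude assumptions: the sieve formula drops its o(1) term, the cubic figure assumes schoolbook arithmetic and ignores error-correction overhead, and the function names are mine rather than anything taken from the literature.

```python
import math


def gnfs_log2_work(modulus_bits: int) -> float:
    """Heuristic GNFS cost for a modulus of the given size, as log2(operations).

    Uses L_N[1/3, (64/9)^(1/3)] with the o(1) term dropped, so treat the
    result as an order-of-magnitude figure only.
    """
    ln_n = modulus_bits * math.log(2)
    c = (64 / 9) ** (1 / 3)
    exponent = c * ln_n ** (1 / 3) * math.log(ln_n) ** (2 / 3)
    return exponent / math.log(2)


def shor_log2_gates(modulus_bits: int) -> float:
    """Assumed cubic gate-count scaling for Shor's algorithm, as log2(gates).

    This is a simplification: constants and error correction are ignored.
    """
    return 3 * math.log2(modulus_bits)


if __name__ == "__main__":
    for bits in (1024, 2048, 4096):
        print(
            f"{bits}-bit modulus: classical ~2^{gnfs_log2_work(bits):.0f} operations, "
            f"quantum ~2^{shor_log2_gates(bits):.0f} gates"
        )
```

For a 2048-bit modulus the classical figure lands near 2^117 operations while the quantum figure is near 2^33 gates, which is the sense in which the speedup is dramatic without being a textbook exponential-versus-polynomial split.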

The JVG algorithm and the new class of hybrid classical-quantum approaches it represents collapse that comfortable distance by what may be a factor of a thousand.

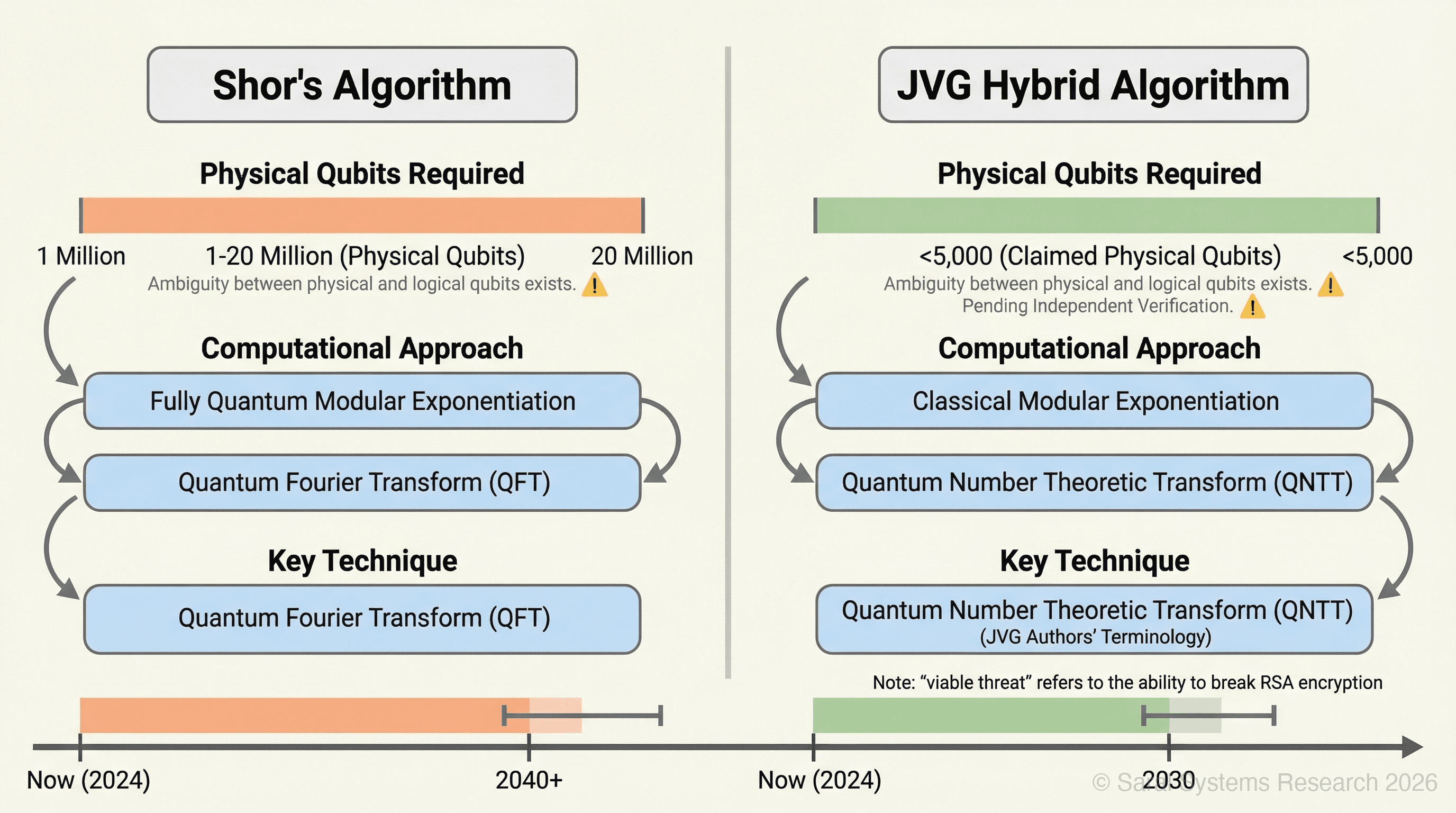

Where Shor's approach demands a fully quantum computation — including the quantum Fourier transform, a notoriously noise-sensitive operation requiring enormous qubit overhead — the JVG algorithm proposes a hybrid classical-quantum decomposition with two key innovations. First, it offloads modular exponentiation — the arithmetically intensive core of the factoring computation — to classical co-processors. Second, it replaces the quantum Fourier transform with what the JVG authors term a quantum number theoretic transform (QNTT), which operates over finite fields rather than complex numbers. The JVG authors claim that QNTT is more noise-tolerant and requires fewer qubits than the quantum Fourier transform, though independent verification of these claims is pending and the term QNTT itself is the authors' own terminology rather than an established construct in the mainstream quantum computing literature. The theoretical claim is that breaking RSA-2048 — the encryption standard protecting most of the world's critical infrastructure — may require fewer than five thousand qubits.
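For readers who want to see where modular exponentiation and the transform step sit, here is a minimal sketch of the textbook reduction that underlies Shor's algorithm: factoring N reduces to finding the multiplicative order r of a base a modulo N. This is not the JVG method. The brute-force `multiplicative_order` function is a classical stand-in (exponentially slow on real key sizes) for the step that Shor's algorithm performs with modular exponentiation in superposition followed by the quantum Fourier transform, and the function names are illustrative.

```python
from math import gcd
from typing import Optional, Tuple


def multiplicative_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, assuming gcd(a, n) == 1.

    Brute force: this is the step a quantum computer would replace.
    """
    r, x = 1, a % n
    while x != 1:
        x = (x * a) % n
        r += 1
    return r


def factor_via_order(n: int) -> Optional[Tuple[int, int]]:
    """Try to split n using the order-finding reduction; None if no split is found."""
    for a in range(2, n):
        g = gcd(a, n)
        if g != 1:
            return g, n // g  # the base already shares a factor with n
        r = multiplicative_order(a, n)
        if r % 2 == 1:
            continue  # an odd order gives no square root to exploit
        y = pow(a, r // 2, n)  # modular exponentiation
        if y == n - 1:
            continue  # trivial square root of 1, try another base
        p = gcd(y - 1, n)
        if 1 < p < n:
            return p, n // p
    return None


if __name__ == "__main__":
    for n in (15, 21, 35):
        print(n, factor_via_order(n))
```

Everything outside `multiplicative_order` is cheap classical arithmetic. The open question about JVG, taken up in the caveats that follow, is how much of the order-finding step itself can be moved off the quantum processor without losing the speedup.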

That five-thousand figure originates from the AQTI announcement as reported in SecurityWeek's summary of the JVG algorithm; the primary research paper should be consulted directly for the precise derivation, and as of this writing no independent replication has confirmed the headline qubit reduction. Set beside the millions once assumed necessary, the claim — if it survives peer review — collapses the timeline from geological to imminent.

Several important caveats must be stated plainly, because a deep-technology lab owes its readers precision rather than drama. First, the five-thousand figure requires careful scrutiny: whether it refers to physical qubits or logical qubits changes the practical meaning by orders of magnitude, since each logical qubit may require hundreds or thousands of physical qubits for error correction. Second, the claim that classical offloading of modular exponentiation preserves the quantum speedup demands rigorous justification — in standard Shor's algorithm, modular exponentiation is performed on the quantum processor as part of the superposition-based computation, and the JVG authors must explain precisely how offloading this step to classical hardware preserves the quantum advantage that makes factoring tractable. The JVG approach appears to argue that the quantum advantage resides primarily in the transform step rather than the modular exponentiation, but this is a non-trivial claim that the cryptographic community is still evaluating. Third, the noise-tolerance claims for QNTT over QFT require substantiation in terms of concrete circuit depth comparisons and gate-error propagation analysis for equivalent problem sizes. The peer-review process for these claims is ongoing.
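The first caveat is easy to make concrete. The sketch below assumes, purely for illustration, that the five-thousand figure means logical qubits and that each logical qubit is encoded in a rotated surface code of distance d, which uses d² data qubits plus d² − 1 measurement qubits. The code distances chosen are hypothetical; which distance a real attack would need depends on gate error rates and circuit depth that the JVG announcement does not settle.

```python
def surface_code_physical_per_logical(distance: int) -> int:
    """Physical qubits per logical qubit in a rotated surface code:
    distance**2 data qubits plus distance**2 - 1 measurement qubits."""
    return 2 * distance * distance - 1


def physical_qubits(logical_qubits: int, distance: int) -> int:
    """Total physical qubits, ignoring routing and magic-state factories
    (an optimistic simplification)."""
    return logical_qubits * surface_code_physical_per_logical(distance)


if __name__ == "__main__":
    logical = 5_000  # the headline figure, read here as logical qubits (assumption)
    for d in (11, 17, 27):  # illustrative code distances only
        print(f"distance {d}: {physical_qubits(logical, d):,} physical qubits")
```

At distance 27 the total is 7,285,000 physical qubits, which is back in the range of the older estimates. Read as physical qubits, the same headline number describes a machine more than a thousand times smaller.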

I state these caveats not to dismiss the research but to frame it honestly: even a partial reduction in qubit requirements — say, by a factor of ten rather than a thousand — would dramatically accelerate the threat timeline, and the cryptographic community is treating these results with appropriate urgency.

Consider the current landscape: IBM's Condor processor reached 1,121 superconducting transmon qubits in December 2023, though it was designed as a manufacturing and packaging milestone rather than a computational one — its two-qubit gate error rates of approximately 1% place it far below the fidelity required for any cryptographic computation, and the gap between its qubit count and the JVG threshold is not a simple arithmetic distance of roughly 4x but a chasm measured in gate fidelity and coherence time and error-correction overhead. IBM's strategic direction has since pivoted toward modular architectures with processors like Heron (133 qubits) that emphasise qubit quality and connectivity over raw count. Microsoft's recent advances in four-dimensional quantum error correction codes are pushing logical qubit fidelity toward the thresholds needed for useful computation. Industry roadmaps from IBM and Google and Microsoft target tens of thousands of qubits — physical and logical, in modular architectures — over the coming decade.

But qubit count alone is a misleading metric, and an honest assessment of the threat timeline must reckon with the gap between having N qubits and having N qubits capable of sustaining a deep quantum circuit. Current two-qubit gate error rates hover between 0.1% and 1%, coherence times remain limited, and connectivity topologies constrain the circuits that can actually be executed. A five-thousand-qubit processor that cannot maintain coherence through the circuit depth required for cryptographic attack is not, in practice, a cryptographic threat. The real threshold is not a qubit number but a combination of qubit count and gate fidelity and coherence time and circuit depth — and that combined threshold, while approaching faster than the comfortable estimates of five years ago, remains a moving and uncertain target.
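A back-of-the-envelope calculation shows why fidelity dominates. The sketch assumes independent gate errors and no error correction at all, so the chance that a circuit of G gates runs cleanly is roughly (1 − p)^G. That model is a deliberate simplification, and the gate counts are illustrative rather than taken from any published attack circuit.

```python
def unprotected_success_probability(gate_error: float, gate_count: int) -> float:
    """Probability that every gate succeeds, assuming independent errors
    and no error correction (a simplifying assumption)."""
    return (1.0 - gate_error) ** gate_count


if __name__ == "__main__":
    for gate_error in (0.01, 0.001):
        for gate_count in (1_000, 10_000, 100_000):
            p = unprotected_success_probability(gate_error, gate_count)
            print(f"error {gate_error:.1%}, {gate_count:>7,} gates: success ~ {p:.2e}")
```

Even at a 0.1% error rate, a ten-thousand-gate circuit succeeds fewer than five times in a hundred thousand runs under this model, which is why error correction and its qubit overhead, rather than raw qubit count, set the real threshold.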

What is not uncertain is the direction of travel. The mathematics that guard a chai vendor's ₹30 transaction and the mathematics that guard a nation's strategic communications are, at their root, the same family of mathematics. And that family may have become, for the first time, practically rather than merely theoretically vulnerable.

The Lakshman Rekha, Exposed

In the Ramayana, Lakshman draws a line around Sita's dwelling — the Lakshman Rekha — a boundary sacred and inviolable, powerful not because of the material from which it was made but because of the faith invested in its protection, a line that no force, it was believed, could ever cross. India's digital public infrastructure is its own Lakshman Rekha: a boundary drawn in mathematics rather than myth, encircling the identity and wealth and governance of 1.4 billion people, and powerful precisely because we have believed — with good empirical reason, until now — that no adversary could cross it.

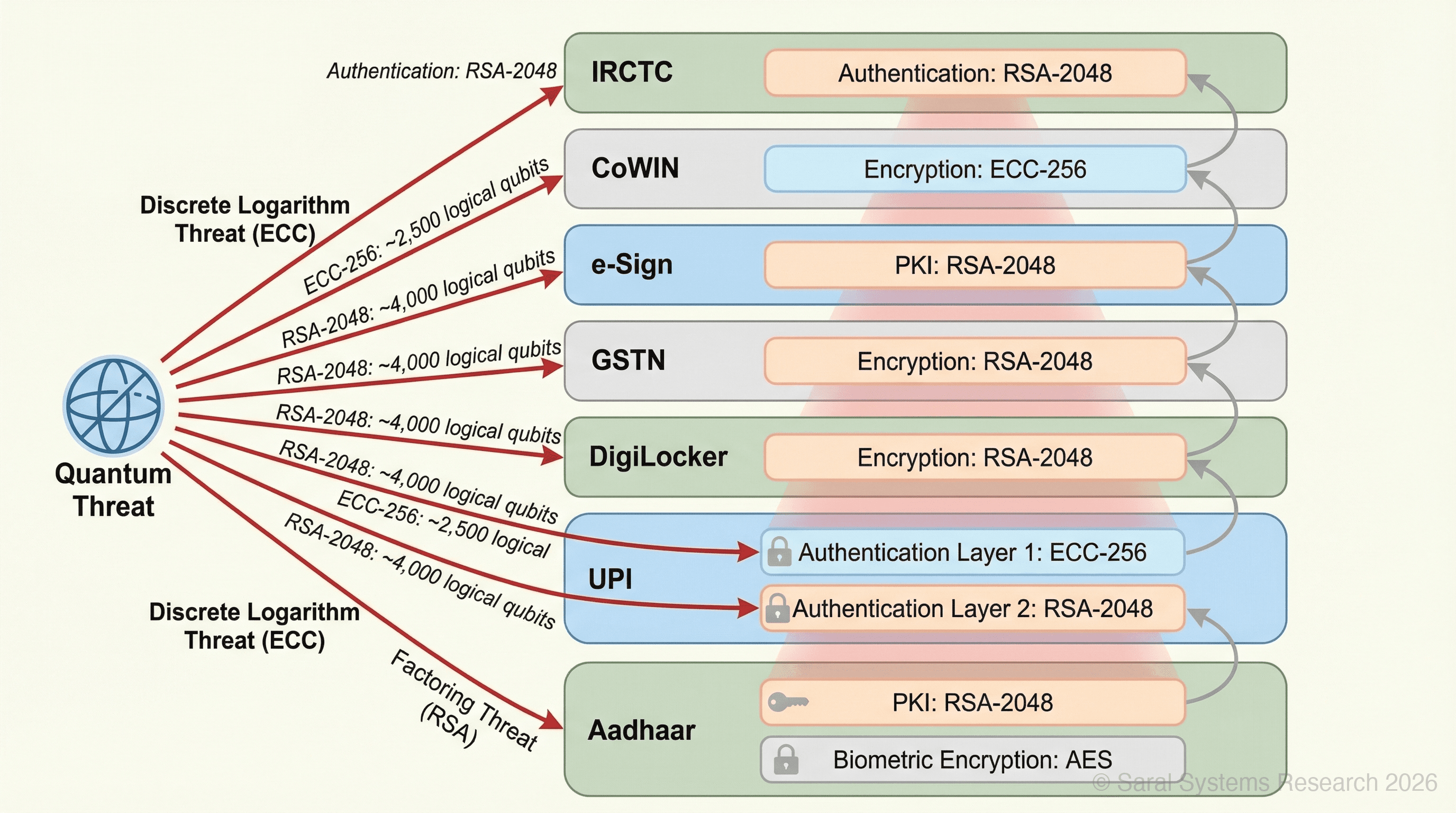

The India Stack is, by any measure, the most ambitious digital public infrastructure ever constructed by a democracy. Aadhaar holds 1.4 billion biometric identities secured by a layered cryptographic architecture that, based on publicly available UIDAI documentation and authentication API specifications, includes RSA-2048 in its PKI infrastructure for digital signatures and biometric packet encryption, alongside AES for symmetric encryption and hardware security modules for key management. The full cryptographic architecture is not publicly disclosed, and the implementation details described here reflect what can be inferred from available technical documents rather than a comprehensive internal audit. Multiple layers exist, but RSA is embedded deeply enough that its compromise would propagate through the system's trust chain. UPI processes billions of transactions per month, each authenticated through a layered security model involving device binding and MPIN and transport-layer encryption and cryptographic digital signatures, a model in which both RSA and ECC play structural roles at different points in the authentication chain. ECC's vulnerability to Shor's algorithm for the discrete logarithm problem presents a distinct but parallel quantum threat, and one that may be more immediately dangerous: estimates from Roetteler et al. (2017) suggest that breaking ECC-256 via Shor's algorithm requires roughly 2,500 logical qubits compared to approximately 4,000 for RSA-2048, making ECC the potentially softer target in a quantum scenario. DigiLocker and GSTN and e-Courts and IRCTC and CoWIN and e-Sign: every layer of governance and commerce and health and daily civic life assumes, at its foundation, that RSA and ECC are unbreakable by any machine that exists or could exist in the near term.

The hybrid quantum algorithms are the moment we learn that the Lakshman Rekha was never absolute but was always a function of the adversary's reach — and the reach is growing.

What makes India's position uniquely consequential is the concentration of its cryptographic dependency — and this concentration is a direct consequence of the India Stack's greatest strength. Where American digital identity is scattered across state DMVs and private credit bureaus and corporate SSO systems — a fragmentation that, for once, functions as a kind of accidental resilience — India's Aadhaar concentrates the cryptographic dependency so that a single failure cascades simultaneously into banking authentication and tax filing and health records and court documents and railway bookings. The architecture that makes the India Stack so elegant and so powerful — its unified, interoperable design — is the same architecture that demands a correspondingly unified and ambitious cryptographic defence. The world's most sophisticated digital public infrastructure requires the world's most sophisticated cryptographic migration, and the ambition of the one must match the ambition of the other.

And there is a threat that does not require waiting for any qubit threshold at all. Intelligence agencies and sophisticated adversaries may already be engaged in what cryptographers call "harvest now, decrypt later": intercepting and storing encrypted Indian government communications and financial data and diplomatic cables today, with the patient confidence that quantum capability will arrive in time to unlock them. The secrets flowing through Indian networks this morning may be read by adversaries in 2030, and there is no retroactive remedy for that exposure.

The Global Scramble and India's Position

The world is not standing still. The US National Security Memorandum NSM-10 (2022) and the subsequent OMB Memorandum M-23-02 established concrete post-quantum cryptography migration timelines for US federal agencies, mandating an inventory of cryptographic systems and a prioritised transition plan. The 2026 National Defense Strategy reinforces this direction by mandating a transition to data-centric security, a framework within which, in this author's assessment, PQC migration functions as a central operational requirement, though the NDS document itself frames the mandate in terms of data-centric security rather than naming PQC explicitly. (Note: the source consulted for this point is a GovExec portal summary; the primary NDS document should be consulted for the precise language of the mandate.) NIST finalised its first post-quantum cryptographic standards in August 2024: ML-KEM (FIPS 203, originally developed as CRYSTALS-Kyber) for key encapsulation, ML-DSA (FIPS 204, originally developed as CRYSTALS-Dilithium) for digital signatures, and SLH-DSA (FIPS 205, originally developed as SPHINCS+) as a hash-based signature alternative. US federal agencies are now migrating toward these standards under the deadlines established by NSM-10 and M-23-02.

India's response has been substantial in ambition and budget. The National Quantum Mission, approved by the Union Cabinet in April 2023 with a budget of ₹6,003.65 crore (approximately $730 million), spans quantum computing and communications and sensing and materials; the ₹8,000 crore figure often quoted comes from the earlier 2020 budget announcement. The Quantum Communications vertical is directly relevant: it includes the development of quantum key distribution (QKD) networks that could, in principle, offer information-theoretically secure key exchange and therefore channels whose secrecy does not depend on any computational assumption a quantum computer might break.

But ambition and deployment are separated by a vast and consequential distance. India has, as of this writing, no announced federal PQC migration timeline equivalent to the US mandate. A review of publicly available guidance from CERT-In and NCIIPC and STQC and RBI and SEBI reveals no published advisories or circulars specifically addressing post-quantum cryptographic readiness for India's critical digital infrastructure — and the absence of such guidance from the agencies best positioned to issue it is itself a data point that underscores the gap between research investment and operational migration planning. The National Quantum Mission has been funded and announced, and yet no public document describes when or how Aadhaar's RSA-2048 keys will be replaced, or which agency owns the sequencing of that replacement, or what happens to UPI's cryptographic signatures in the interim, or how DigiLocker and GSTN and e-Sign will be audited and transitioned. The distance a locksmith must travel between understanding that every lock in a billion-door building has been compromised and finishing the last rekey while tenants pass through every door every day — that is the distance between a research mission and an infrastructure migration.

A nation that draws its Lakshman Rekha in mathematics must also redraw it in mathematics — and the redrawing is always harder than the drawing, because 1.4 billion people must continue to live and transact and trust within the old boundary while the new one is being inscribed.

The Bridge Builders: From Labs to Lakshman Rekha

The question that remains is whether the new line can be inscribed — in lattice-based mathematics rather than prime-number mathematics — before the old one is crossed, and whether the inscription can proceed fast enough across a continent-sized architecture. And across India, the bridge builders are already at work.

IISc Bangalore's Centre for Quantum Information and Quantum Computing is conducting foundational research in quantum algorithms and post-quantum cryptography — the theoretical bedrock upon which any migration must rest. IIT Madras and IIT Bombay host quantum labs working on hardware simulation and algorithm development. QNu Labs, based in Bengaluru, has emerged as India's first quantum-safe security company, developing both QKD hardware and PQC software solutions — though deploying these at India Stack scale requires a fundamentally different pace and ambition than serving enterprise clients.

IBM's quantum network partnership with Indian institutions provides researchers access to processors exceeding 100 qubits, enabling the kind of experimental work that turns theoretical PQC standards into practical implementations. And a development reported in October 2025 may prove consequential for the operational side of quantum computing: IBM demonstrated that a key quantum error correction decoding algorithm can run on conventional, off-the-shelf AMD chips (field-programmable gate arrays rather than custom silicon). To be precise about what this means: QEC requires a classical control plane that decodes error syndromes in real time, and demonstrating that conventional processors can handle this decoding workload is essential for operating error-corrected quantum computers at scale. This does not replace the need for quantum hardware, since Indian labs still need access to actual quantum processors for algorithm development and testing, but it means that the classical infrastructure supporting QEC can be built on commodity hardware, lowering one barrier to India's participation in the error-correction race.

The hardware market, too, is responding. Forward Edge-AI's Isidore Quantum data diode, designed to protect sensitive operational technology endpoints with quantum-resistant encryption, signals that the commercial ecosystem is no longer waiting for the threat to arrive before building defences.

But the real challenge — the challenge that keeps me awake at Saral Systems — is migration. Retrofitting post-quantum cryptography into systems that serve 1.4 billion people, systems that process billions of transactions a month, systems that cannot go offline for a weekend while the boundary is redrawn — this is an engineering and governance problem of a scale that has no precedent. The new Lakshman Rekha must be inscribed while 1.4 billion people continue to live and transact and trust within the old one.

The Clock and the Calendar

Let me be honest about the timeline, because honesty is the only useful posture when the stakes are civilizational.

Current leading quantum processors operate at approximately 1,000 to 1,200 physical qubits, though the computational utility of these processors, measured in achievable circuit depth and gate fidelity and coherence time, remains far below what cryptographic attacks would require. On this author's reading of published roadmaps from IBM and Google and Microsoft, and of scaling trajectories accelerated by developments like Riverlane's 2026 quantum error correction predictions and Microsoft's four-dimensional error correction codes, processors with five thousand or more physical qubits may exist between 2028 and 2032. But the arrival of five thousand physical qubits and the arrival of cryptographically useful computation are two different milestones separated by an uncertain interval: the gate fidelity requirements (below 0.1% two-qubit error) and coherence time requirements (sufficient for circuit depths in the tens of thousands of gates) and error-correction overhead may add years beyond the qubit-count milestone, or the gap may compress rapidly if error-correction advances continue accelerating in the ways that have surprised even their practitioners. IBM's own roadmap already targets 100,000+ qubits by 2033, suggesting that raw qubit count is not the binding constraint; quality is. The date could slip later if engineering obstacles prove stubborn, or it could lurch sooner if another algorithmic breakthrough further compresses the qubit requirement, and neither possibility is remote enough to plan around.

But the "harvest now, decrypt later" threat means the clock has already started for data with long-term sensitivity — defence communications, diplomatic cables, financial records, biometric templates. The encrypted data transmitted today has a shelf life measured in decades, and the adversary's patience is measured in the same units.
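Cryptographers often frame this with Michele Mosca's inequality: if the number of years data must stay secret (x) plus the number of years migration will take (y) exceeds the number of years until a cryptographically relevant quantum computer exists (z), then some of that data is already exposed. The sketch below simply encodes that inequality. The input values are hypothetical planning numbers chosen for illustration, not forecasts.

```python
def years_of_exposure(shelf_life: float, migration_time: float, years_to_quantum: float) -> float:
    """Mosca's inequality: exposure exists when x + y > z.

    Returns how many years of sensitive data would be readable, or 0.0 if none.
    """
    return max(0.0, shelf_life + migration_time - years_to_quantum)


if __name__ == "__main__":
    # Hypothetical inputs: (label, shelf life x, migration time y, years to quantum z)
    scenarios = [
        ("biometric templates", 50, 5, 6),
        ("diplomatic cables", 25, 5, 6),
        ("session tokens", 0.01, 5, 6),
    ]
    for label, x, y, z in scenarios:
        print(f"{label}: {years_of_exposure(x, y, z):.1f} years exposed")
```

The only term a defender controls is the migration time, which is why the start date of a migration matters more than any single estimate of when the machine arrives.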

The essential design principle for the years ahead is what cryptographers call crypto-agility: architecting systems so that encryption algorithms can be swapped without rebuilding the entire stack. Crypto-agility means building the India Stack so that its encryption algorithms can be replaced the way a living body replaces its cells: continuously, without ever leaving the organism unprotected during the renewal, each new cryptographic primitive tested and load-bearing before the old one is retired, the lattice-based algorithms running alongside the prime-number algorithms until the transition is proven and the old layer can finally be shed. A crypto-agile India Stack could transition from RSA to ML-KEM for key establishment and ML-DSA for signatures, and then to whatever comes after them, without the catastrophic re-engineering that a monolithic design demands.
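In code, crypto-agility mostly comes down to two habits: never hard-code an algorithm at the call site, and always label a signature with the algorithm that produced it. The sketch below is one hypothetical way to arrange that, with a registry of schemes and a policy list that says which algorithms must verify. Everything here is an assumption for illustration: the names `legacy-demo` and `pq-demo`, the `Envelope` type and the policy list are mine, and the two "schemes" are HMAC stand-ins from the Python standard library, not RSA or ML-DSA, because the point is the plumbing rather than the primitives.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Callable, Dict, List

SignFn = Callable[[bytes, bytes], bytes]


def _hmac_with(digest_name: str) -> SignFn:
    """Build a stand-in 'signature' function. NOT a real signature scheme."""
    def sign(key: bytes, message: bytes) -> bytes:
        return hmac.new(key, message, getattr(hashlib, digest_name)).digest()
    return sign


# Placeholder algorithms only; a real system would register RSA, ML-DSA, etc.
REGISTRY: Dict[str, SignFn] = {
    "legacy-demo": _hmac_with("sha256"),
    "pq-demo": _hmac_with("sha512"),
}


@dataclass(frozen=True)
class Envelope:
    alg: str    # algorithm identifier travels with the signature
    sig: bytes


def sign_all(message: bytes, keys: Dict[str, bytes], algs: List[str]) -> List[Envelope]:
    """Sign with every listed algorithm, so old and new run side by side."""
    return [Envelope(alg, REGISTRY[alg](keys[alg], message)) for alg in algs]


def verify(message: bytes, keys: Dict[str, bytes],
           envelopes: List[Envelope], required: List[str]) -> bool:
    """Accept only if every algorithm the current policy requires verifies."""
    by_alg = {e.alg: e.sig for e in envelopes}
    for alg in required:
        if alg not in by_alg or alg not in REGISTRY:
            return False
        # Stand-ins are symmetric, so verification recomputes the tag.
        expected = REGISTRY[alg](keys[alg], message)
        if not hmac.compare_digest(by_alg[alg], expected):
            return False
    return True


if __name__ == "__main__":
    keys = {"legacy-demo": b"old-key", "pq-demo": b"new-key"}
    msg = b"pay 30 INR"
    dual = sign_all(msg, keys, ["legacy-demo", "pq-demo"])
    print(verify(msg, keys, dual, required=["legacy-demo", "pq-demo"]))  # transition phase
    print(verify(msg, keys, dual, required=["pq-demo"]))                 # after retirement
```

Retiring the old algorithm then becomes a change to the `required` policy list rather than a change to every caller, which is the property the cell-replacement image is describing.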

India's window is three to five years — to audit every cryptographic dependency in the India Stack and publish a federal PQC migration roadmap and begin the painstaking, line-by-line work of replacing the cryptographic primitives across a billion-user system and test each new implementation under adversarial conditions before the old one fails. The cost of delay is not theoretical. It is measured in the retroactive exposure of every transaction and identity and secret transmitted between now and the day the migration is complete.

Somewhere in Varanasi, the chai vendor's phone pings again. Another ₹30, another green checkmark, another small act of faith in an architecture he has never seen and does not need to understand. The transaction completes in seconds, and the morning continues, and the chai steams in its clay cup, and the Ganga flows behind him as it has flowed for millennia, carrying no opinion about the mathematics that guard his livelihood or the mathematics that may soon undo those guards. The Lakshman Rekha was drawn in code, and it held because the mathematics behind it outpaced every adversary's reach — and now the reach is lengthening, and the mathematics must lengthen with it, and the hands doing the redrawing must work faster than the hands probing the old line for weakness.